Uncertainty quantification is essential for deploying deep learning in safety-critical applications such as autonomous systems, medicine, and scientific discovery, yet existing methods sacrifice accuracy through parametric approximations.

We develop AdamMCMC, a novel Markov Chain Monte Carlo algorithm that bridges stochastic optimization and Bayesian inference. AdamMCMC wraps the popular Adam optimizer inside a Metropolis-adjusted Langevin algorithm (MALA) framework, using a *prolate proposal distribution* aligned with the Adam update direction. This alignment keeps acceptance rates high at the step sizes commonly used in deep learning, while the Metropolis-Hastings correction guarantees convergence to the exact Gibbs posterior.
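To make the idea concrete, here is a minimal NumPy sketch of one way a Metropolis-Hastings step with a prolate Gaussian proposal could look. It is an illustration under stated assumptions, not the AdamMCMC implementation: a plain gradient step stands in for the Adam update, the target is a toy quadratic loss, and the names `update`, `log_q`, `run_chain`, `sigma`, and `s` are hypothetical. The proposal covariance is assumed to be `sigma**2 * I + s**2 * u u^T`, which stretches the Gaussian along the update direction `u`.

```python
import numpy as np

def log_target(theta, A, lam=1.0):
    # Toy Gibbs-style target: log density is -lam * loss, with loss = 0.5 * theta^T A theta.
    return -lam * 0.5 * theta @ A @ theta

def update(theta, A, lr):
    # Stand-in for an optimizer update (assumption: plain gradient step, not Adam).
    return -lr * (A @ theta)

def log_q(x, mean, u, sigma, s):
    # Log density of N(mean, sigma^2 I + s^2 u u^T), evaluated with the
    # matrix determinant lemma and the Sherman-Morrison formula.
    d = x.size
    r = x - mean
    uu = u @ u
    logdet = d * np.log(sigma**2) + np.log1p(s**2 * uu / sigma**2)
    quad = (r @ r - s**2 * (u @ r) ** 2 / (sigma**2 + s**2 * uu)) / sigma**2
    return -0.5 * (d * np.log(2 * np.pi) + logdet + quad)

def run_chain(theta0, A, n_steps=5000, lr=0.1, sigma=0.3, s=1.0, seed=0):
    rng = np.random.default_rng(seed)
    theta = np.asarray(theta0, dtype=float)
    samples, accepted = [], 0
    for _ in range(n_steps):
        u = update(theta, A, lr)
        # Isotropic noise plus extra noise along u gives the prolate shape.
        prop = (theta + u
                + sigma * rng.standard_normal(theta.size)
                + s * u * rng.standard_normal())
        # The reverse proposal is centred on the update taken from the proposed point.
        u_back = update(prop, A, lr)
        log_alpha = (log_target(prop, A) - log_target(theta, A)
                     + log_q(theta, prop + u_back, u_back, sigma, s)
                     - log_q(prop, theta + u, u, sigma, s))
        # Metropolis-Hastings accept/reject step.
        if np.log(rng.uniform()) < log_alpha:
            theta, accepted = prop, accepted + 1
        samples.append(theta.copy())
    return np.array(samples), accepted / n_steps
```

Calling `run_chain(np.array([2.0, -1.0]), np.diag([1.0, 4.0]))` returns the chain and its acceptance rate for a two-dimensional Gaussian target. Because the accept/reject step uses the exact forward and reverse proposal densities, the chain targets the stated distribution regardless of the step size; the step size only affects how often proposals are accepted.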

We cover the following aspects:

- Theory: Because AdamMCMC uses a Metropolis-Hastings correction, the chain converges to the exact Gibbs posterior, unlike stochastic gradient methods that only approximate it at finite step sizes. We characterize this convergence in total variation distance.

- Scaling: The algorithm is adapted for multi-GPU training via Distributed Data Parallel (DDP), adding only ~15% training-time overhead compared to standard Adam on 8 GPUs.

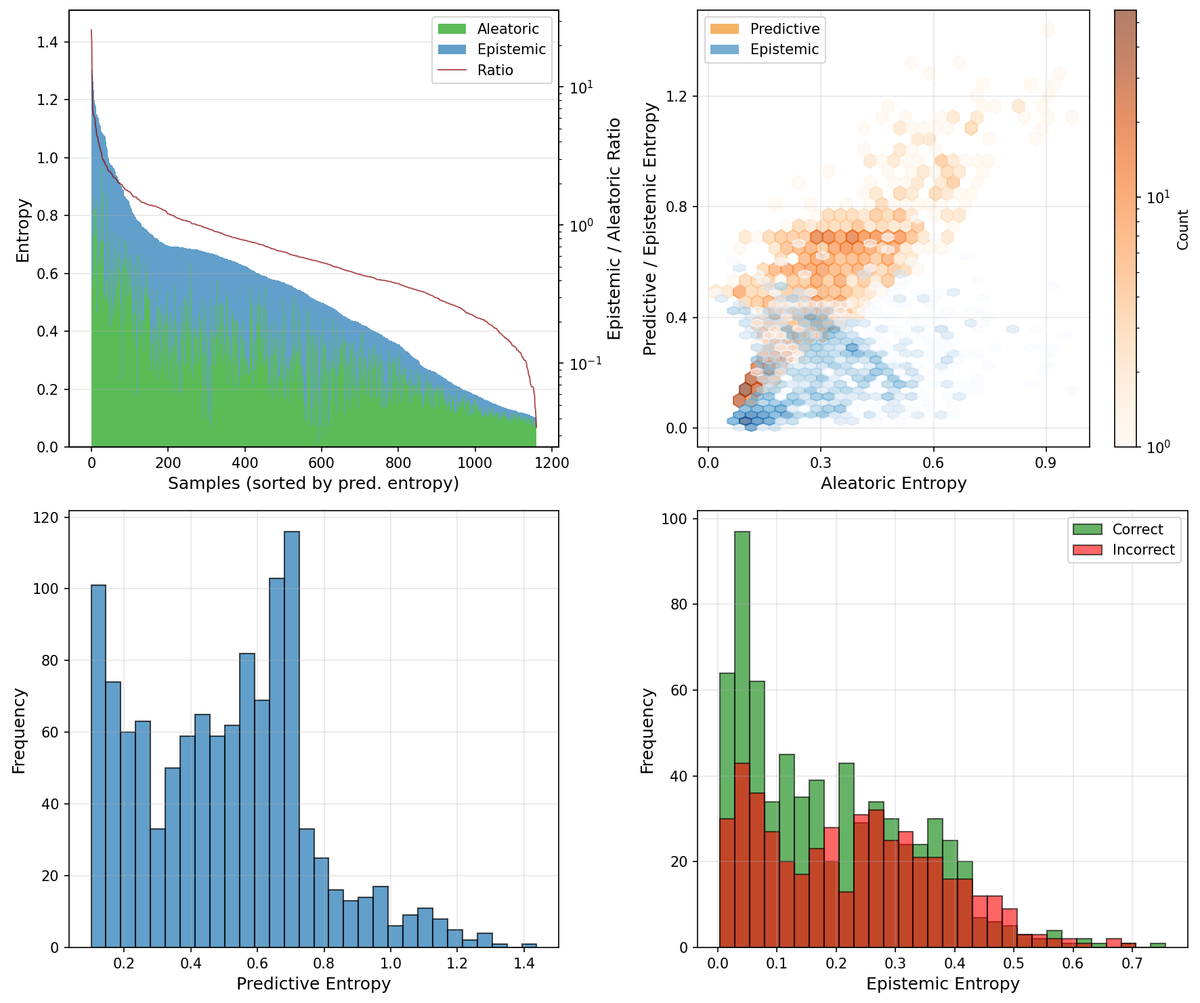

- Uncertainty disentanglement: We apply AdamMCMC to a large-scale benchmark that separates aleatoric (data) from epistemic (model) uncertainty. The results suggest that the flatness of the loss landscape around a minimum plays an important role in how well a method can disentangle the two uncertainty types.
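As an illustration of what separating the two uncertainty types can mean in practice, the sketch below applies a common entropy-based decomposition to class probabilities predicted by several posterior weight samples. This is an assumption made for illustration; the benchmark may use a different decomposition. The function name `decompose_uncertainty` and the array layout are hypothetical.

```python
import numpy as np

def decompose_uncertainty(probs, eps=1e-12):
    """Split predictive uncertainty into aleatoric and epistemic parts.

    probs has shape (n_weight_samples, n_points, n_classes): the class
    probabilities predicted by each sampled set of network weights.
    """
    probs = np.asarray(probs, dtype=float)
    mean_p = probs.mean(axis=0)
    # Total uncertainty: entropy of the sample-averaged prediction.
    total = -np.sum(mean_p * np.log(mean_p + eps), axis=-1)
    # Aleatoric part: average entropy of the individual predictions.
    aleatoric = -np.sum(probs * np.log(probs + eps), axis=-1).mean(axis=0)
    # Epistemic part: the remainder, i.e. disagreement between weight samples.
    epistemic = total - aleatoric
    return total, aleatoric, epistemic
```

Under this decomposition, weight samples that all predict the same uncertain distribution produce purely aleatoric uncertainty, while weight samples that each predict confidently but disagree with one another produce purely epistemic uncertainty.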

AdamMCMC makes principled Bayesian inference over neural network weights practical at scale, offering a drop-in replacement for Adam with rigorous uncertainty estimates.