Perception and Scene Understanding

Group Leaders: Dr. rer. nat. Martin Lauer and Dr. Carlos Fernández López

Perception and scene understanding is one of the largest and most important research areas in autonomous driving. In the abstract, it covers every step involved in building an environment model that can subsequently be used to plan the behavior of an autonomous car. This begins with the automated processing of all data captured by the car's sensors, such as camera images, radar measurements, or LiDAR point clouds. The processed data is registered across sensors using the calibration between them and afterwards fused into one common environment model. Using techniques from pattern recognition and machine learning, it is also possible to accurately classify observed objects, traffic participants, and environment elements into a defined set of classes and to predict their behavior several seconds into the future.
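As a rough illustration of how a calibration lets data from different sensors be related, the sketch below projects a single LiDAR point into a camera image with a pinhole model. The rotation, translation, and intrinsic parameters are made-up values chosen for readability, not the calibration of any real vehicle, and the function names are hypothetical.

```python
def transform_point(rotation, translation, point):
    """Apply a rigid transform: 3x3 rotation (nested lists) plus 3-vector translation."""
    return [
        sum(rotation[r][c] * point[c] for c in range(3)) + translation[r]
        for r in range(3)
    ]


def project_to_image(point_cam, fx, fy, cx, cy):
    """Pinhole projection of a camera-frame point; None if it lies behind the camera."""
    x, y, z = point_cam
    if z <= 0.0:
        return None
    return (fx * x / z + cx, fy * y / z + cy)


# Assumed extrinsic calibration (hypothetical values):
# LiDAR frame is x forward, y left, z up; camera frame is x right, y down, z forward.
R_CAM_FROM_LIDAR = [
    [0.0, -1.0, 0.0],
    [0.0, 0.0, -1.0],
    [1.0, 0.0, 0.0],
]
T_CAM_FROM_LIDAR = [0.0, -0.5, 0.0]

# A LiDAR return 10 m ahead, 1 m to the left, 0.5 m above the sensor.
lidar_point = [10.0, 1.0, 0.5]
point_cam = transform_point(R_CAM_FROM_LIDAR, T_CAM_FROM_LIDAR, lidar_point)

# Assumed intrinsics (hypothetical values): focal lengths and principal point in pixels.
pixel = project_to_image(point_cam, fx=1000.0, fy=1000.0, cx=640.0, cy=360.0)
```

Once a LiDAR point has a pixel coordinate, its range measurement can be associated with whatever the camera pipeline detected at that location, which is one simple form of the fusion step described above.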

Our Divide-and-Merge approach for end-to-end autonomous driving separates motion and semantic learning to avoid negative transfer and improve perception, prediction, and planning.


We generate high-fidelity driving scenes from simulators. This provides realistic data for training autonomous driving stacks and enables closed-loop end-to-end evaluation.


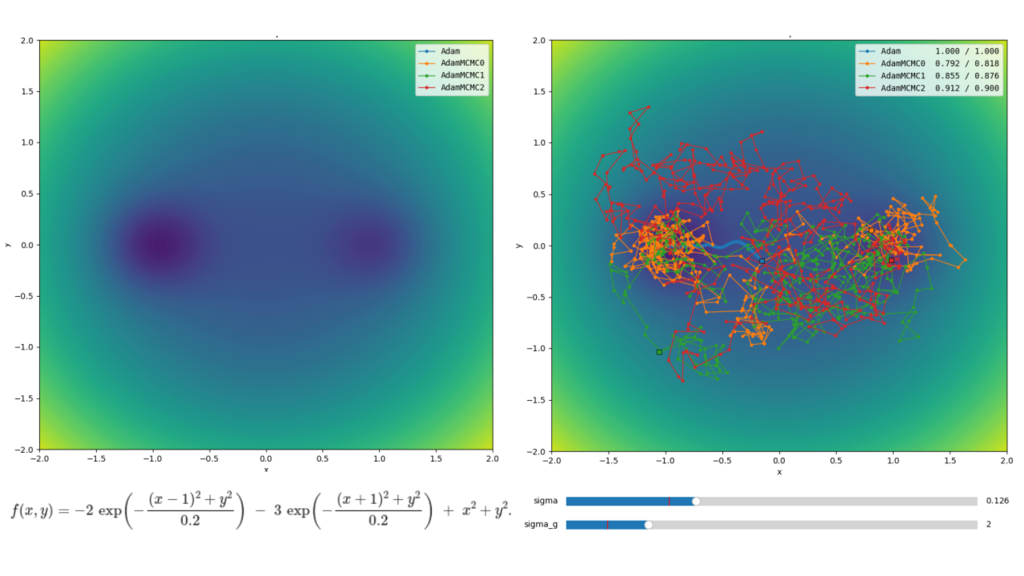

We combine the Adam optimizer with Metropolis-Hastings corrections to efficiently sample neural network weight posteriors, enabling principled uncertainty quantification.
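To give a feel for what a Metropolis-Hastings correction does, here is a minimal sketch on a one-dimensional toy "posterior" (a standard normal). It is not the Adam-based sampler described above: the proposal is a plain Langevin gradient step (the Metropolis-adjusted Langevin algorithm), swapped in because its proposal density is easy to write down. All function names and the step size are assumptions made for illustration.

```python
import math
import random


def log_target(w):
    """Log-density (up to a constant) of the toy target: a standard normal."""
    return -0.5 * w * w


def grad_log_target(w):
    """Gradient of log_target with respect to w."""
    return -w


def log_proposal(w_to, w_from, step):
    """Log-density (up to a constant) of the Langevin proposal q(w_to | w_from)."""
    mean = w_from + 0.5 * step * grad_log_target(w_from)
    return -((w_to - mean) ** 2) / (2.0 * step)


def mala_step(w, step, rng):
    """One gradient-informed proposal followed by a Metropolis-Hastings accept/reject."""
    mean = w + 0.5 * step * grad_log_target(w)
    proposal = mean + math.sqrt(step) * rng.gauss(0.0, 1.0)
    # The correction compares target densities and accounts for the asymmetric proposal.
    log_alpha = (
        log_target(proposal) + log_proposal(w, proposal, step)
        - log_target(w) - log_proposal(proposal, w, step)
    )
    if rng.random() < math.exp(min(0.0, log_alpha)):
        return proposal, True
    return w, False


def run_chain(n_samples, step=0.5, start=3.0, burn_in=1000, seed=0):
    """Run the chain and return post-burn-in samples plus the acceptance rate."""
    rng = random.Random(seed)
    w, samples, accepted = start, [], 0
    for i in range(n_samples + burn_in):
        w, ok = mala_step(w, step, rng)
        accepted += ok
        if i >= burn_in:
            samples.append(w)
    return samples, accepted / (n_samples + burn_in)


samples, accept_rate = run_chain(20000)
sample_mean = sum(samples) / len(samples)
sample_var = sum((s - sample_mean) ** 2 for s in samples) / len(samples)
```

Without the accept/reject step, the discretized gradient dynamics would sample from a slightly biased distribution; the correction removes that bias, which is what makes the resulting uncertainty estimates principled.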


We develop methods for analyzing visual datasets in autonomous driving by automatically describing the differences between image subsets.
