Self-supervised learning

In self-supervised learning, supervisory signals are generated from unlabeled data via pretext tasks. In a self-driving vehicle, sensors such as cameras and LiDAR can be used to create training data without human intervention. By leveraging the vast amount of unlabeled data generated during normal driving, self-supervised learning algorithms can extract meaningful features and representations from the raw sensor inputs. Self-supervised learning can therefore play a crucial role in improving the perception and decision-making capabilities of self-driving cars, contributing to their safety and reliability.

Environment Perception Under Low-Visibility Conditions

Automated vehicles must be capable of precisely perceiving the environment despite low-visibility conditions. We analyze the effects of various visibility conditions (darkness, glare, rain, snow, fog) on the results of environment perception algorithms. Our aim is to develop novel preprocessing and domain adaptation approaches to improve the cross-domain robustness of object detection and tracking methods.

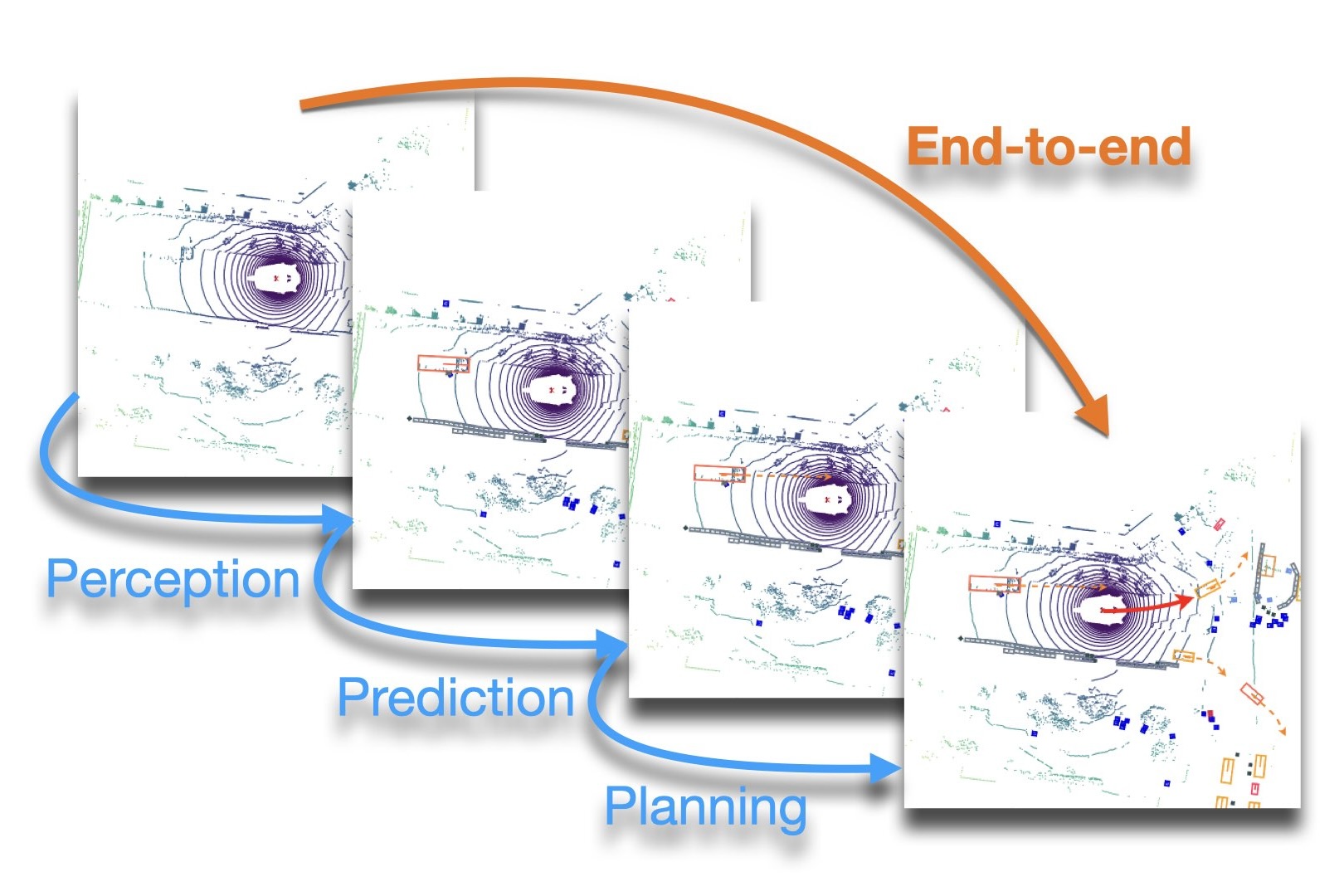

End-to-end perception and prediction

Object tracking and trajectory prediction are closely related components of the autonomous driving pipeline, yet they have been researched independently in past years. At inference time, however, errors from the tracking module reduce the prediction quality, which is known as the cascading error problem. A promising solution is end-to-end perception and prediction. Conversely, the prediction module can also assist the tracking module in achieving better tracking performance. For the entire autonomous driving pipeline, the problem is even more severe. An end-to-end autonomous driving system will be investigated in the future.

Conditional behavior prediction

Recently, the focus of traffic agent position prediction has shifted from predicting individual agents independently of their surroundings to predicting agents conditioned on the behavior of surrounding agents. We propose to predict pair-wise distributions for all pairs of agents in a scene. Marginal distributions for individual agents can then be derived from those combined distributions if necessary, but primarily, the combined distributions can be analyzed directly for conditioned behavior modes.
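As a rough illustration of how marginal and conditioned distributions follow from a pair-wise (joint) distribution, the sketch below uses a small discrete table over behavior modes. The mode names, probabilities, and function names are hypothetical and chosen only for illustration; they are not taken from the actual prediction model, which may well operate on continuous positions.

```python
from collections import defaultdict

# Hypothetical pair-wise distribution over behavior modes of agents A and B.
# Mode names and probabilities are invented for illustration only.
joint_ab = {
    ("yield", "go"): 0.45,
    ("go", "yield"): 0.40,
    ("go", "go"): 0.05,
    ("yield", "yield"): 0.10,
}


def marginal(joint, axis):
    """Sum out the other agent (axis 0 = agent A, axis 1 = agent B)."""
    result = defaultdict(float)
    for modes, probability in joint.items():
        result[modes[axis]] += probability
    return dict(result)


def conditional(joint, given_axis, given_mode):
    """Distribution of the other agent's mode, given one agent's mode."""
    other_axis = 1 - given_axis
    selected = {
        modes[other_axis]: probability
        for modes, probability in joint.items()
        if modes[given_axis] == given_mode
    }
    total = sum(selected.values())
    return {mode: probability / total for mode, probability in selected.items()}


marginal_a = marginal(joint_ab, 0)
b_given_a_go = conditional(joint_ab, 0, "go")
print(marginal_a)
print(b_given_a_go)
```

In this toy table, the marginal of agent A looks almost undecided between the two modes, while the conditioned view shows that B most likely yields once A goes. This is the kind of interaction that is only visible in the combined distribution.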

Probabilistic pedestrian prediction

In order to react correctly to people's behavior, a prediction of their probable future positions is needed. This video shows how pedestrians can be predicted using an artificial neural network that was trained with trajectories of humans in traffic scenes. The green circles correspond to the minimum prediction horizon of 1 second, the red circles to the maximum prediction horizon of 4 seconds. With this prediction, the ego vehicle can brake for pedestrians even if they have not yet started crossing the road.

Simultaneous object tracking and shape estimation

Traditional object tracking methods approximate vehicle geometry using simple bounding boxes. We develop methods that simultaneously estimate object motion and reconstruct detailed object shape using laser scanners. The resulting shape information has benefits for the tracking process itself, but can also be vital for subsequent processing steps such as trajectory planning in evasive steering.

Vehicle Prediction

Since most interactions with other traffic participants occur between vehicles, vehicle behavior prediction is a core task of scene understanding. To complete this task, it is necessary to process all available information about the surroundings, including but not limited to recognized obstacles, road geometry, other traffic participants, drivable area, and traffic rules that apply to the current situation. The multitude of information required, as well as the complexity of potential interactions between vehicles, makes prediction a difficult challenge.

Realtime Environment Perception with Range Sensors

We aim to find algorithms that process observations from range sensors in real time. Here, we assume that the motion of traffic participants can be estimated w.r.t. a common ground surface. We investigate methods to transfer range sensor observations from 3D space to the ground surface. The ground surface itself can be expressed as a dense 2D grid map, with each cell referencing 3D points. With this method, fast algorithms can be applied within the dense grid representation and their results easily transferred back to the 3D domain.
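The idea of grid cells that reference 3D points can be sketched as follows. This is a minimal example under strong assumptions: the ground surface is taken to be the flat plane z = 0, the grid is stored sparsely in a dictionary rather than as a dense array, and the function names and the cell size are hypothetical.

```python
import math
from collections import defaultdict


def build_grid_map(points, cell_size=0.5):
    """Assign each (x, y, z) point to a 2D ground cell.

    Each cell stores the indices of the 3D points that fall into it,
    so results computed on the grid can be mapped back to 3D.
    Assumes a flat ground plane at z = 0 (an illustrative simplification).
    """
    grid = defaultdict(list)
    for index, (x, y, _z) in enumerate(points):
        cell = (math.floor(x / cell_size), math.floor(y / cell_size))
        grid[cell].append(index)
    return grid


def points_in_cell(grid, points, cell):
    """Return the original 3D points referenced by a grid cell."""
    return [points[i] for i in grid.get(cell, [])]


points = [(0.1, 0.2, 0.0), (0.3, 0.4, 1.5), (2.6, -0.1, 0.3)]
grid = build_grid_map(points)
print(dict(grid))
print(points_in_cell(grid, points, (5, -1)))
```

Because every cell keeps the indices of its points, a label computed per cell (for example "occupied" or "moving") can be transferred back to all referenced 3D points with a simple lookup.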

Robust visual tracking in traffic scenarios

Object tracking is an essential part of behavior analysis and trajectory prediction in autonomous driving. Vision-based devices are able to provide rich information about observed environments.

Our aim is to develop novel approaches that track objects using powerful image features and can deal with challenging scenarios such as occlusions and deteriorated visual conditions.

Trajectory Estimation with Visual Odometry for Micro Mobility Applications

Visual Odometry (VO) supplies the movement of a camera between two frames by analyzing the displacement between corresponding feature points. Given a time series recorded on a moving system, the driven trajectory as well as the velocity can be estimated iteratively. We stabilize the trajectory estimation with a ground plane and horizon estimation based on Time-of-Flight camera data. With an implementation aimed at efficiency, the algorithm can run in real time on low-cost hardware (e.g. Raspberry Pi), making it applicable to micro mobility applications such as electric scooters.
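The iterative estimation of trajectory and velocity from frame-to-frame motion can be sketched as simple pose chaining. The example below is a simplified 2D illustration, not the actual implementation: it assumes that the relative motions `(dx, dy, dtheta)` between consecutive frames are already available in metric scale, and the function names and the frame interval are hypothetical.

```python
import math


def compose(pose, delta):
    """Apply a relative motion, expressed in the current camera frame,
    to a global 2D pose (x, y, theta)."""
    x, y, theta = pose
    dx, dy, dtheta = delta
    return (
        x + math.cos(theta) * dx - math.sin(theta) * dy,
        y + math.sin(theta) * dx + math.cos(theta) * dy,
        theta + dtheta,
    )


def integrate(deltas, frame_dt):
    """Chain frame-to-frame motions into a trajectory and per-frame speeds."""
    poses = [(0.0, 0.0, 0.0)]
    speeds = []
    for delta in deltas:
        poses.append(compose(poses[-1], delta))
        speeds.append(math.hypot(delta[0], delta[1]) / frame_dt)
    return poses, speeds


# Two hypothetical frame-to-frame motions: 1 m forward with a 90 degree
# left turn, then 1 m forward in the new heading.
deltas = [(1.0, 0.0, math.pi / 2), (1.0, 0.0, 0.0)]
poses, speeds = integrate(deltas, frame_dt=0.1)
print(poses[-1])
print(speeds)
```

Since each pose is built on the previous one, small errors in the relative motions accumulate over time, which is why additional constraints such as a ground plane estimate help to stabilize the result.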

Mapless Driving Based on Precise Lane Topology Estimation and Criticality Assessment

Modern ADAS rely on highly accurate maps that may become outdated very quickly due to construction work and changes in the routing system. Such cases result in unexpected behavior if no fallback algorithms exist that are able to navigate without a precise map. This project targets such situations and extracts the lane geometry based only on the sensor system (e.g. laser or camera). The current focus lies on applying machine learning algorithms to estimate the topology directly from camera images. The resulting scene model is used for trajectory planning, which explicitly incorporates observed trajectories, perception and estimation uncertainties, and occlusions.

Continuous Trajectory Bundle Adjustment with Spinning Range Sensors

We investigate the calibration of multiple spinning range sensors as well as the Simultaneous Localization and Mapping (SLAM) problem and aim to optimize calibration, map and map-relative pose together. For spinning range sensors, measurements are not generated at the same time. In addition, high-rate IMU systems make the optimization problem ill-conditioned when modeled in discrete state space. By modeling the trajectory as a continuous function, we can handle this property and model a large set of different acquisition times.
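To illustrate why a continuous trajectory helps with measurements taken at different times, the sketch below queries a pose at an arbitrary timestamp. It uses plain linear interpolation between control poses as a stand-in; the actual trajectory model is not specified here and is likely more elaborate (for example a spline). The names `pose_at`, `knot_times`, and `knot_poses` are hypothetical, and yaw is interpolated linearly without wrap-around handling.

```python
from bisect import bisect_right


def pose_at(knot_times, knot_poses, t):
    """Linearly interpolate a pose (x, y, yaw) at time t between control poses."""
    if not knot_times[0] <= t <= knot_times[-1]:
        raise ValueError("t lies outside the trajectory")
    # Index of the control pose that ends the segment containing t.
    i = min(bisect_right(knot_times, t), len(knot_times) - 1)
    t0, t1 = knot_times[i - 1], knot_times[i]
    w = (t - t0) / (t1 - t0)
    return tuple(a + w * (b - a) for a, b in zip(knot_poses[i - 1], knot_poses[i]))


# Hypothetical control poses at 10 Hz.
knot_times = [0.0, 0.1, 0.2]
knot_poses = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.5, 0.1)]

# Each range measurement carries its own acquisition time.
point_times = [0.025, 0.05, 0.15]
poses = [pose_at(knot_times, knot_poses, t) for t in point_times]
print(poses)
```

With such a query function, every single measurement of a spinning sensor can be associated with the sensor pose at its own acquisition time, without adding one discrete state per measurement to the optimization.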

Stereo Based Environment Perception

Cameras are an essential component for receiving information about the environment of autonomous vehicles. With two cameras in a stereo setup, it is possible to compute depth information of the perceived scene, which can then be used together with semantic information to estimate the position and dimensions of all types of road users around the vehicle.
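For a rectified stereo pair, depth follows from the standard pinhole relation Z = f · B / d, with focal length f in pixels, baseline B in meters, and disparity d in pixels. The sketch below shows this relation and the back-projection of a pixel to a 3D point. The function names and all camera parameters are hypothetical example values, not those of any specific sensor setup.

```python
def depth_from_disparity(disparity_px, focal_length_px, baseline_m):
    """Depth in meters for a rectified stereo pair: Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_length_px * baseline_m / disparity_px


def back_project(u, v, disparity_px, focal_length_px, baseline_m, cx, cy):
    """3D point (x, y, z) in the left camera frame for pixel (u, v)."""
    z = depth_from_disparity(disparity_px, focal_length_px, baseline_m)
    x = (u - cx) * z / focal_length_px
    y = (v - cy) * z / focal_length_px
    return (x, y, z)


# Hypothetical camera: 700 px focal length, 0.5 m baseline,
# principal point at (640, 360).
point = back_project(740, 360, 35.0, 700.0, 0.5, 640.0, 360.0)
print(point)
```

Since depth is inversely proportional to disparity, a fixed disparity error leads to a depth error that grows roughly quadratically with distance, which is one reason why distant objects are harder to localize with stereo.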

Pixel-wise image labeling with Convolutional Neural Networks

Camera images contain valuable information about the environment for autonomous cars. Some of this information cannot be acquired from other sensors. We use deep learning and especially Convolutional Neural Networks to extract information like class labels and depth for each pixel.

Visual Odometry With Non-Overlapping Multi-Camera-Systems

Visual odometry is a crucial component of autonomous driving systems. It supplies the driven trajectory in a dead-reckoning sense, which can then be used for global localization or behaviour generation. To make the estimation more robust, sensor setups with multiple cameras are of particular interest, yielding consistent trajectories over several kilometers with very low drift.

For released code, visit us on GitHub: https://github.com/KIT-MRT